Frances Buontempo from BuontempoConsulting

git config --system http.sslverify false

This is asking for trouble. However, you can install the certificate so that you don't need to keep doing this.

Derek Jones from The Shape of Code

I date the age of the Algorithm from roughly the 1960s to the late 1980s.

During the age of the Algorithms, developers spent a lot of time figuring out the best algorithm to use and writing code to implement algorithms.

Knuth’s The Art of Computer Programming (TAOCP) was the book that everybody consulted to find an algorithm to solve their current problem (wafer thin paper, containing tiny handwritten corrections and updates, was glued into the library copies of TAOCP held by my undergraduate university; updates to Knuth was news).

Two developments caused the decline of the age of the Algorithm (and the rise of the age of the Ecosystem and the age of the Platform; topics for future posts).

Algorithms are still being invented, and some developers spend most of their working time with algorithms, but peak Algorithm is long gone.

Perhaps academic researchers in software engineering would do more relevant work if they did not spend so much time studying algorithms. But, as several researchers have told me, algorithms are what people in their own and other departments think computing-related research is all about. They remain shackled to the past.

Timo Geusch from The Lone C++ Coder's Blog

Saw the announcement on the GNU Emacs mailing list this morning. Much to my surprise, it’s also already available on Homebrew. So my Mac is now sporting a new fetching version of Emacs as well :). I’ve been running the release candidate on several Linux machines already and was very happy with it, so upgrading my OS X install was pretty much a no-brainer. Here we go:

The post Emacs 26.1 has been released (and it’s already on Homebrew) appeared first on The Lone C++ Coder's Blog.

Samathy from Stories by Samathy on Medium

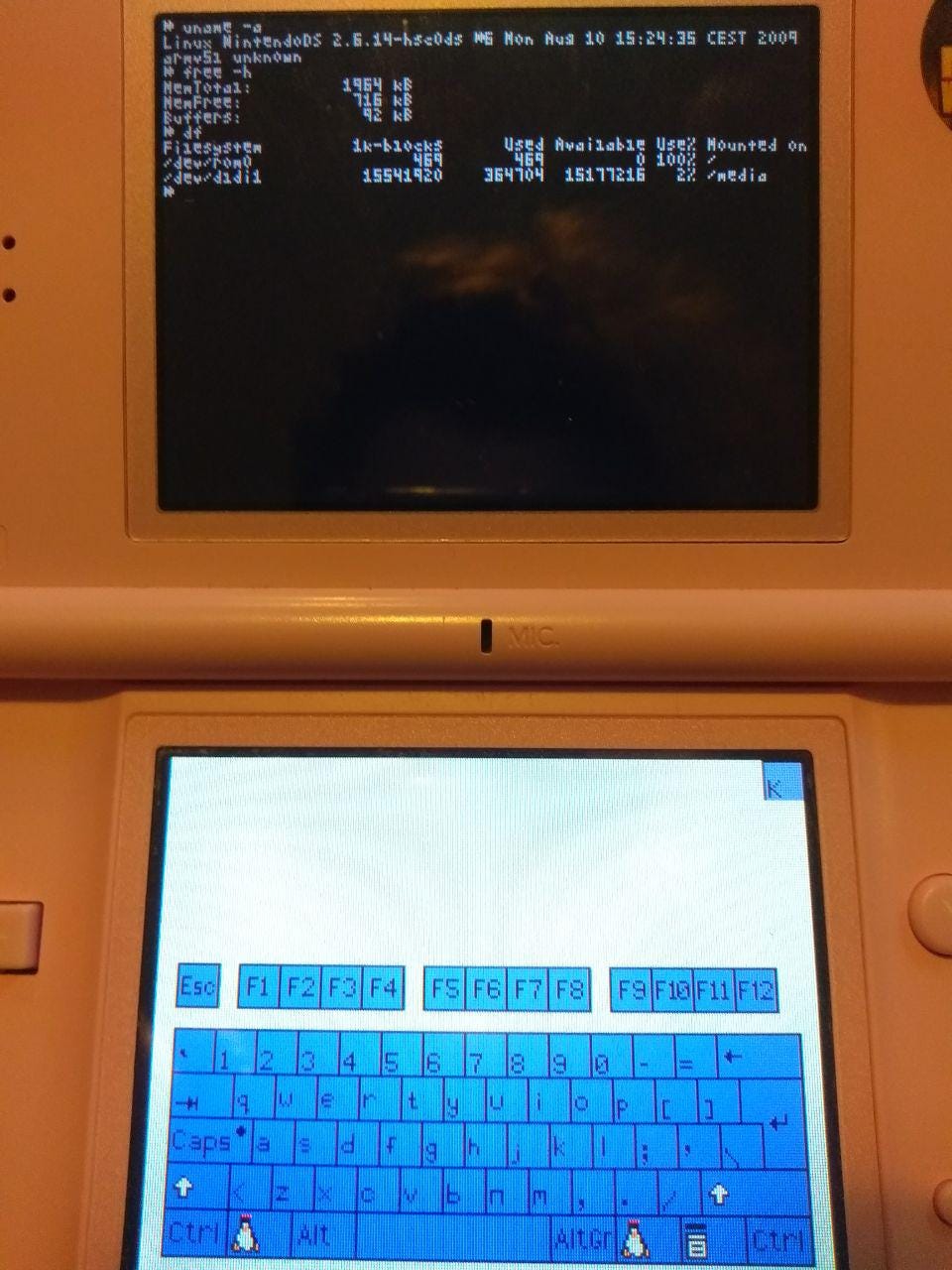

I recently bought a gorgeous pink Nintendo DSLite with the sole purpose of running DSLinux on it.

When I posted about my success on Mastodon, someone helpfully asked “Has it have any use tho?”.

Let’s answer that right away: running Linux on a Nintendo DSLite is at best a few hours’ entertainment for the masochistic technologist, and at worst a waste of your time.


But I do rather enjoy running Linux on things that should not be running Linux, or at least attempting to do so. So here’s what I did!

DSLinux runs on a bunch of devices. Luckily, we had some R4 cards and an M3DS Real lying around the place, both of which are supported by DSLinux.

I purchased a SuperCard SD from eBay to provide some extra RAM, which is apparently quite useful, since the DSLite has only 2 MB of its own. The SuperCard SD I bought had 32 MB of extra RAM, bringing the total up to some 34 MB, wowee.

The first cards I tried were the R4 cards we had.

They’re popular and supported by DSLinux. Unfortunately, it seems the ones we’ve got are knockoffs and therefore proved challenging to find firmware for.

I spent a long while searching around the internet and trying various firmwares for R4 cards, but none of the ones I tried did anything except show the ‘Menu?’ screen on boot.

Finally, finding this post on GBATemp.net from a user with a card that looks exactly the same as mine led me to give up on the R4 card and move on to the M3DS Real, although the post did prove useful later.

It should be noted that the R4 card I had had never been tested anyway, so it might never have worked.

The M3DS Real is another card listed as supported on the DSLinux site, so it seemed a good one to try.

We had a Micro-SD card in the M3DS Real anyway, with the M3 Sakura firmware on it, so it seemed reasonable to just jump in there.

I copied the firmware onto another SD card (because we didn’t want to lose the data on the original card). It was only three folders in the root of the card, SYSTEM, NDS and SKINS, with the NDS folder containing the ‘games’.

In this case, I put the DSLinux files (dslinux.nds, dslinuxm.nds and ‘linux’, a folder) into the NDS folder and stuck the card in my DSLite.

After selecting DSLinux from the menu, I got the joy of... a blank screen.

Some forum posts, which are the first results when searching for the issue on DuckDuckGo, suggest that something called DLDI is the culprit.

The DSLinux ‘Running DSLinux’ page does mention patching the ‘dslinux.nds’ file with DLDI if the device one is using doesn’t support auto-DLDI. At the time this was all meaningless jargon to me, since I’d never done any Nintendo DS homebrew before.

Turns out, DLDI is a library that allows programs to “read and write files on the memory card inserted into one of the system’s slots”.

Homebrew games must be ‘patched’ for whatever device you’re using to allow them to read/write to the storage device.

Most of the links to DLDI on the DSLinux page were broken, but we discovered the new home of DLDI and its associated tools to be www.chishm.com/DLDI/.

I patched the dslinux.nds file using the Linux command-line tool and saw no change in the behaviour of the DSLite: still white screens.

Upon reading the DSLinux wiki page for devices a little closer, I noticed that the listing for the M3DS Real notes that one should ‘Use loader V2.7d or V2.8’.

What is a loader??

It means the card’s firmware/menu.

Where do I find it?

On the manufacturer’s website or, bringing back the post mentioned earlier from the R4 card user on GBATemp.net, one can find lots of firmware for lots of different cards here: http://www.linfoxdomain.com/nintendo/ds/

Under the listing on the above site for ‘M3/G6 DS Real and M3i Zero’ one can find a link to firmware versions V2.7d and V2.8 listed as ‘M3G6_DS_Real_v2.8_E15_EuropeUSAMulti.zip’.

Upon installing this firmware to the SD card (by copying the ‘SYSTEM’ folder to the root of a FAT32-formatted card), I extracted the DSLinux files again (thus, without the DLDI patching I’d done earlier) and placed the files ‘dslinux.nds’, ‘dslinuxm.nds’ and the folder ‘linux’ in an ‘NDS’ folder, also in the root of the drive.

This is INCORRECT.

Upon loading the dslinux.nds file through the M3DS Real menu it did indeed boot Linux, but dropped me into a single-user mode, with essentially no binaries in the PATH.

This is consistent with the Linux kernel having booted successfully but not being able to find any userland, hence the single-user mode and the lack of programs.

Progress at least!

I re-read the DSLinux instructions and caught the clear statement about the DSLinux files: ‘Both of these must be extracted to the root directory of the CF or SD card.’

Upon moving the DSLinux files to the root of the card and starting ‘dslinux.nds’ from the M3DS Real menu, I had a working Linux system!!

Notice the ‘DLDI compatible’ message that pops up when starting DSLinux: that means the M3DS Real auto-patches binaries when it runs them. Nice.

Next, I’ll probably start by trying to compile a newer kernel and userspace.

DSLinux uses kernel 2.6, which, at the time of writing, is two major versions out of date.

After that, I’d like to understand how DSLinux is handling the multiple screens and multiple processors.

The DS has an ARM7 and an ARM9 processor and two screens, which I think are not connected to the same processor. The buttons are split between the chips too.

Lastly, I’d like to write something for Linux on the DS.

Probably something silly, but I’d like to give it a try!

Don’t ask me questions about DSLinux; I don’t really know anything more than what I’ve mentioned here. I just read some wikis, solved some problems and did some searching.

Thanks to the developers of DSLinux and DLDI for making this silliness possible.

Chris Oldwood from The OldWood Thing

About 18 months or so ago I wrote a post about how I’d seen tests written that were self-reinforcing (“Tautologies in Tests”). The premise was about the use of the same production code to verify the test outcome as that which was supposedly under test. As such, any break in the production code would likely not get picked up because the test behaviour would naturally change too.

It’s also possible to see the opposite kind of effect where the test code really becomes the behaviour under test rather than the production code. The use of mocking within tests is a magnet for this kind of situation as a developer mistakenly believes they can save time [1] by writing a more fully featured mock [2] that can be reused across tests. This is a false economy.

Example - Database Querying

I recently saw an example of this in some database access code. The client code (under test) first configured a filter where it calculated an upper and lower bound based on timestamps, e.g.

```csharp
// non-trivial time based calculations
var minTime = ...
var maxTime = ...

query.Filter["MinTime"] = minTime;
query.Filter["MaxTime"] = maxTime;
```

The client code then executed the query and performed some additional processing on the results which were finally returned.

The test fixture created some test data in the form of a simple list with a couple of items, presumably with one that lies inside the filter and another that lies outside, e.g.

```csharp
var orders = new[]
{
    new Order { ..., Timestamp = "2016-05-12 18:00:00" },
    new Order { ..., Timestamp = "2018-05-17 02:15:00" },
};
```

The mocked out database read method then implemented a proper filter to apply the various criteria to the list of test data, e.g.

```csharp
{
    var result = orders;

    if (filter["MinTime"])
        ...

    if (filter["MaxTime"])
        ...

    if (filter[...])
        ...

    return result;
}
```

As you can imagine, this starts out quite simple for the first test case, but as the production code behaviour gets more complex, so do the mock and the test data. Adding new test data to cater for the new scenarios will likely break the existing tests, as they all share a single set, and therefore you will need to go back and understand them to ensure each test still exercises the behaviour it used to. Ultimately, you’re starting to test whether you can actually implement a mock that satisfies all the tests rather than writing individual tests which independently validate the expected behaviours.

Shared test data (not just placeholder constants like AnyCustomerId) is rarely a good idea, as it’s often not obvious which piece of data is relevant to which test. The moment you start adding comments to annotate the test data, you have truly lost sight of the goal. Tests are not just about verifying behaviour either; they are a form of documentation too.

Roll Back

If we reconsider the feature under test we can see that there are a few different behaviours that we want to explore: how the query filter is configured, and how the returned results are post-processed.

Luckily, the external dependency (i.e. the mock) provides us with a seam which allows us to directly verify the filter configuration and also to control the results which are returned for post-processing. Consequently, rather than having one test that tries to do everything, or a few tests that try and cover both aspects together, we can separate them out, perhaps even into separate test fixtures based around the different themes, e.g.

```csharp
public static class reading_orders
{
    [TestFixture]
    public class filter_configuration
    ...

    [TestFixture]
    public class post_processing
    ...
}
```

The first test fixture now focuses on the logic used to build the underlying query filter by asserting the filter state when presented to the database. It then returns, say, an empty result set as we wish to ignore what happens later (by invoking as little code as possible to avoid false positives).

The following example attempts to define what “yesterday” means in terms of filtering:

```csharp
[Test]
public void filter_for_yesterday_is_midnight_to_midnight()
{
    DateTime? minTime = null;
    DateTime? maxTime = null;

    var mockDatabase = CreateMockDatabase((filter) =>
    {
        minTime = filter["MinTime"];
        maxTime = filter["MaxTime"];
    });

    var reader = new OrderReader(mockDatabase);
    var now = new DateTime(2001, 2, 3, 9, 32, 47);

    reader.FindYesterdaysOrders(now);

    Assert.That(minTime, Is.EqualTo(
        new DateTime(2001, 2, 2, 0, 0, 0)));
    Assert.That(maxTime, Is.EqualTo(
        new DateTime(2001, 2, 3, 0, 0, 0)));
}
```

As you can hopefully see, the mock in this test is only configured to extract the filter state, which we then verify later. The mock configuration is done inside the test to make it clear that the only point of interest is the filter’s eventual state. We don’t even bother capturing the final output as it’s superfluous to this test.
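The tests in this post call a CreateMockDatabase helper and an OrderReader class that are never shown. As a rough sketch only, assuming a hypothetical IOrderDatabase interface whose filter is a string-keyed dictionary of DateTime values (every name here is illustrative, not any real library’s API), the mock can stay this thin, with one overload for filter tests and one for post-processing tests:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-ins: the post elides Order, the database type and OrderReader.
public class Order
{
    public DateTime Timestamp { get; set; }
    public int Version { get; set; }
}

public interface IOrderDatabase
{
    IEnumerable<Order> Read(IDictionary<string, DateTime> filter);
}

// A deliberately "dumb" mock: no filtering logic, it just reports the filter
// it was given and returns whatever results the test chose up front.
public class MockOrderDatabase : IOrderDatabase
{
    private readonly Action<IDictionary<string, DateTime>> _onRead;
    private readonly IEnumerable<Order> _results;

    public MockOrderDatabase(Action<IDictionary<string, DateTime>> onRead,
                             IEnumerable<Order> results)
    {
        _onRead = onRead;
        _results = results;
    }

    public IEnumerable<Order> Read(IDictionary<string, DateTime> filter)
    {
        _onRead(filter);
        return _results;
    }
}

public static class MockFactory
{
    // Filter tests: capture the filter and return an empty result set.
    public static IOrderDatabase CreateMockDatabase(
        Action<IDictionary<string, DateTime>> onRead) =>
        new MockOrderDatabase(onRead, Enumerable.Empty<Order>());

    // Post-processing tests: ignore the filter and return canned results.
    public static IOrderDatabase CreateMockDatabase(
        Func<IEnumerable<Order>> results) =>
        new MockOrderDatabase(_ => { }, results());
}

// Hypothetical production code: "yesterday" is midnight to midnight.
public class OrderReader
{
    private readonly IOrderDatabase _database;

    public OrderReader(IOrderDatabase database) => _database = database;

    public List<Order> FindYesterdaysOrders(DateTime now)
    {
        var midnightToday = now.Date;
        var filter = new Dictionary<string, DateTime>
        {
            ["MinTime"] = midnightToday.AddDays(-1),
            ["MaxTime"] = midnightToday,
        };
        return _database.Read(filter).ToList();
    }
}
```

Called via MockFactory.CreateMockDatabase (or a using static directive), the filter test above would pass against this OrderReader, and the mock never acquires filtering logic of its own.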

If we had a number of tests to write which all did the same mock configuration we could extract it into a common [SetUp] method, but only if we’ve already grouped the tests into separate fixtures which all focus on exactly the same underlying behaviour. The Single Responsibility Principle applies to the design of tests as much as it does the production code.

A different approach here might be to use the filter object itself as a seam and sense the calls into that instead. Personally, I’m very wary of getting too specific about how an outcome is achieved. Way back in 2011 I wrote “Mock To Test the Outcome, Not the Implementation”, which showed where this rabbit hole can lead, i.e. to brittle tests that focus too much on the “how” and not enough on the “what”.

Mock Results

With the filtering side taken care of we’re now in a position to look at the post-processing of the results. Once again we only want code and data that is salient to our test and as long as the post-processing is largely independent of the filtering logic we can pass in any inputs we like and focus on the final output instead:

```csharp
[Test]
public void upgrade_objects_to_latest_schema_version()
{
    var anyTime = DateTime.Now;

    var mockDatabase = CreateMockDatabase(() =>
    {
        return new[]
        {
            new Order { ..., Version = 1, ... },
            new Order { ..., Version = 2, ... },
        };
    });

    var reader = new OrderReader(mockDatabase);
    var orders = reader.FindYesterdaysOrders(anyTime);

    Assert.That(orders.Count, Is.EqualTo(2));
    Assert.That(orders.Count(o => o.Version == 3),
        Is.EqualTo(2));
}
```

Our (simplistic) post-processing example here ensures that all re-hydrated objects have been upgraded to the latest schema version. Our test data is specific to verifying that one outcome. If we expect other processing to occur, we use different data more suitable to that scenario and only use it in that test. Of course, in reality we’ll probably have a set of “builders” that we’ll use across tests to reduce the burden of creating and maintaining test data objects as the data models grow over time.
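The post doesn’t show what those “builders” look like. One common shape is a fluent test data builder, sketched here under the assumption of a hypothetical Order with Id, Timestamp and Version properties, and treating 3 as the latest schema version as in the test above. Every field gets a harmless default, so each test spells out only the values that matter to it:

```csharp
using System;

// Hypothetical Order model standing in for the post's elided one.
public class Order
{
    public int Id { get; set; }
    public DateTime Timestamp { get; set; }
    public int Version { get; set; }
}

// Fluent test data builder: defaults for everything, overrides per test.
public class OrderBuilder
{
    private int _id = 1;
    private DateTime _timestamp = new DateTime(2000, 1, 1);
    private int _version = 3; // assumed latest schema version

    public OrderBuilder WithId(int id) { _id = id; return this; }

    public OrderBuilder WithTimestamp(DateTime timestamp)
    {
        _timestamp = timestamp;
        return this;
    }

    public OrderBuilder WithVersion(int version) { _version = version; return this; }

    // Creates a fresh Order each time, so tests never share mutable data.
    public Order Build() =>
        new Order { Id = _id, Timestamp = _timestamp, Version = _version };
}
```

In the upgrade test, the canned results could then read new OrderBuilder().WithVersion(1).Build(), which makes the one value that matters to that test stand out.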

Refactoring

While reading this post you may have noticed that certain things have been suggested, such as splitting out the tests into separate fixtures. You may have also noticed that I discovered “independence†between the pre and post phases of the method around the dependency being mocked which allows us to simplify our test setup in some cases.

Your reaction to all this may well be to suggest refactoring the method by splitting it into two separate pieces which can then be tested independently. The current method then just becomes a simple composition of the two new pieces. Additionally you might have realised that the simplified test setup probably implies unnecessary coupling between the two pieces of code.
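A minimal sketch of that split, using hypothetical names (YesterdayWindow, UpgradeAll) and a read delegate standing in for the database dependency. It assumes the filter is just a min/max time pair and, as in the simplistic test above, that upgrading means bumping every order to schema version 3. Both pieces become pure functions that can be tested without any mock, leaving FindYesterdaysOrders as a thin composition:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical model; a record so "with" can copy it with one field changed.
public record Order(int Id, int Version);

public static class OrderQueries
{
    // Pre-processing: "yesterday" runs from midnight yesterday to midnight today.
    public static (DateTime MinTime, DateTime MaxTime) YesterdayWindow(DateTime now)
    {
        var midnightToday = now.Date;
        return (midnightToday.AddDays(-1), midnightToday);
    }
}

public static class OrderUpgrades
{
    public const int LatestVersion = 3; // assumed, matching the test above

    // Post-processing: bring every re-hydrated order up to the latest schema.
    public static List<Order> UpgradeAll(IEnumerable<Order> orders) =>
        orders.Select(o => o with { Version = LatestVersion }).ToList();
}

public class OrderReader
{
    // Stand-in for the database: given a time window, return matching orders.
    private readonly Func<DateTime, DateTime, IEnumerable<Order>> _read;

    public OrderReader(Func<DateTime, DateTime, IEnumerable<Order>> read) => _read = read;

    // The original method is now just a composition of the two pieces.
    public List<Order> FindYesterdaysOrders(DateTime now)
    {
        var (minTime, maxTime) = OrderQueries.YesterdayWindow(now);
        return OrderUpgrades.UpgradeAll(_read(minTime, maxTime));
    }
}
```

With this shape, the only test that still needs a fake dependency is one checking that the composition wires the two pieces together.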

For me, those kinds of thoughts are the reason why I spend so much effort on trying to write good tests; it’s the essence of Test Driven Design.

[1] My ACCU 2017 talk “A Test of Strength” (shorter version) shows my own misguided attempts to optimise the writing of tests.

[2] There is a place for “heavier” mocks (which I still need to write up) but it’s not in unit tests.

Frances Buontempo from BuontempoConsulting

I've been writing a batch file to run some mathematical models over a set of inputs.

Products, the Universe and Everything from Products, the Universe and Everything

This is a recommended maintenance update for Visual Lint 6.5. The following changes are included: