Allan Kelly from Allan Kelly Associates

“Hi Allan, what do you think of this as a Sprint Goal?

- Prototype store locator

- Deploy product selector to live

- Fix accessibility defects identified by client

- Complete visual design of search feature

- Security fixes & updates

- Team improvement: refactor VX tables, page template processor”

I replied:

“This looks more like a sprint backlog than a sprint goal.”

This e-mail exchange sums up the problem with the sprint goal, or rather, the sprint goal as it so often ends up being used.

The sprint goal has always been part of Scrum, even if it has often been forgotten. The idea behind it was to ask: “What is the outcome this team needs to make happen this sprint?” The goal was meant to be non-trivial: a meaningful step forward, an outcome, perhaps a challenge, certainly a rallying point.

However, the sprint goal fell into disuse. When I used to run teams I never used it – partly because my teams never used strict Scrum, but also because most of the teams I worked with had multiple things happening. The teams were expected to make progress across a broad front, whereas the sprint goal focuses the team on a single thing.

My experience was far from unique. And, if I’m being honest, in the days when I gave agile training regularly I never talked about it much. Again, most of the teams I encountered were expected to “deliver stuff”; it was more a case of “burning down the backlog.”

When I did see the sprint goal in use, it was normally used in reverse. Rather than setting a goal and asking “What do we need to do to make this happen?”, teams would pick a collection of stories from the backlog and then ask “What goal can we write that describes this collection of items?” In such cases the goal might as well be “Do stuff” or perhaps “Do the collection of stories we think we can do.”

The goal was meaningless so why bother?

Yet I detect a change in the air. In the last few years I’ve heard the sprint goal talked about more, and I’ve observed teams setting a goal more often. Plus, as I wrote in Succeeding with OKRs in Agile, a sprint goal sits well with OKRs; it also provides a way to cut through the tyranny of the backlog.

Unfortunately, I have to report that the teams I see setting sprint goals are still setting goals along the lines of “Do these stories from the backlog.”

Why is this?

Perhaps it is because the sprint goal is misunderstood, or perhaps it is because people are aiming to tick off as many Scrum practices as they can; maybe they feel they must use the goal because Scrum lists it.

I’m sure both of these reasons are at play, but I think the main reason is backlog fetish and the expectation that teams “do the backlog.” Teams – and especially product owners – either lack the skills or are not given the authority to decide what to do based on fresh information arriving from customers, analytics and analysis.

That is: most teams are still expected to burn down the backlog.

Well, it is one way of working, and I understand the logic. Burning down a backlog with Scrum is probably still better than ticking off use cases from a requirements document in a waterfall, but it still leaves so much opportunity unrealised. Things could be so much better if teams really worked to sprint goals and OKRs rather than labouring under the tyranny of the backlog.

So, if you want some practical advice: if you are setting sprint goals in reverse, just give up, accept that you “do backlog items” and save yourself the time of inventing a goal.

And if you are not setting a sprint goal: have a serious talk about it as a team, examine what having a sprint goal would mean and how you might work differently. Then experiment with using a sprint goal for a few sprints.

This advice goes double if you are a Product Owner: seriously using sprint goals will relieve you of a lot of backlog administration, but it means you will need to think hard about goals and what will really improve your product.


The post The Sprint Goal? appeared first on Allan Kelly Associates.

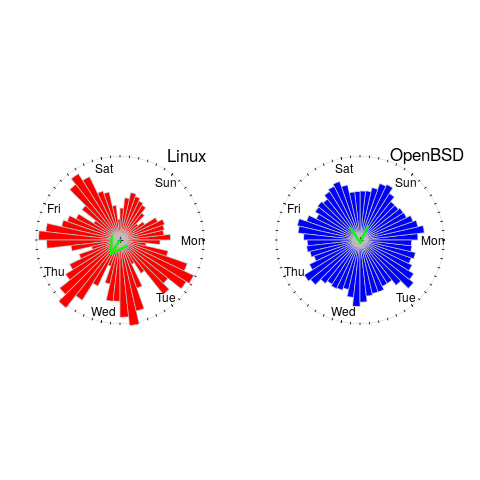

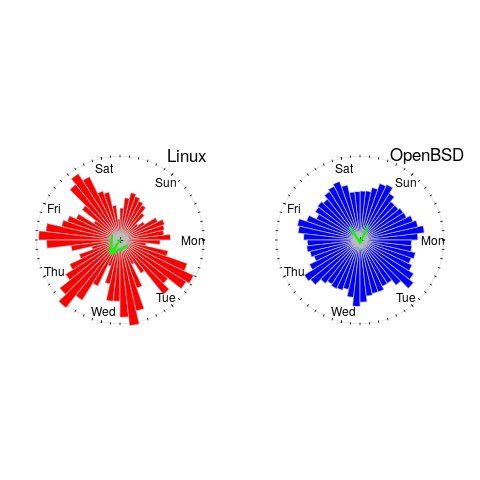
